Confluent Blog

Introducing Confluent Private Cloud: Cloud-Level Agility for Your Private Infrastructure

Confluent Private Cloud (CPC) is a new software package that extends Confluent’s cloud-native innovations to your private infrastructure. CPC offers an enhanced broker with up to 10x higher throughput and a new Gateway that provides network isolation and central policy enforcement without client...

Queues for Apache Kafka® Is Here: Your Guide to Getting Started in Confluent

Confluent announces the General Availability of Queues for Kafka on Confluent Cloud and Confluent Platform with Apache Kafka 4.2. This production-ready feature brings native queue semantics to Kafka through KIP-932, enabling organizations to consolidate streaming and queuing infrastructure while...

New in Confluent Intelligence: A2A, Multivariate Anomaly Detection, Vector Search for Cosmos DB, Amazon S3 Vectors, and More

Explore new Confluent Intelligence features: A2A integration, multivariate anomaly detection, vector search for Cosmos DB and S3 Vectors, Private Link, and MCP support.

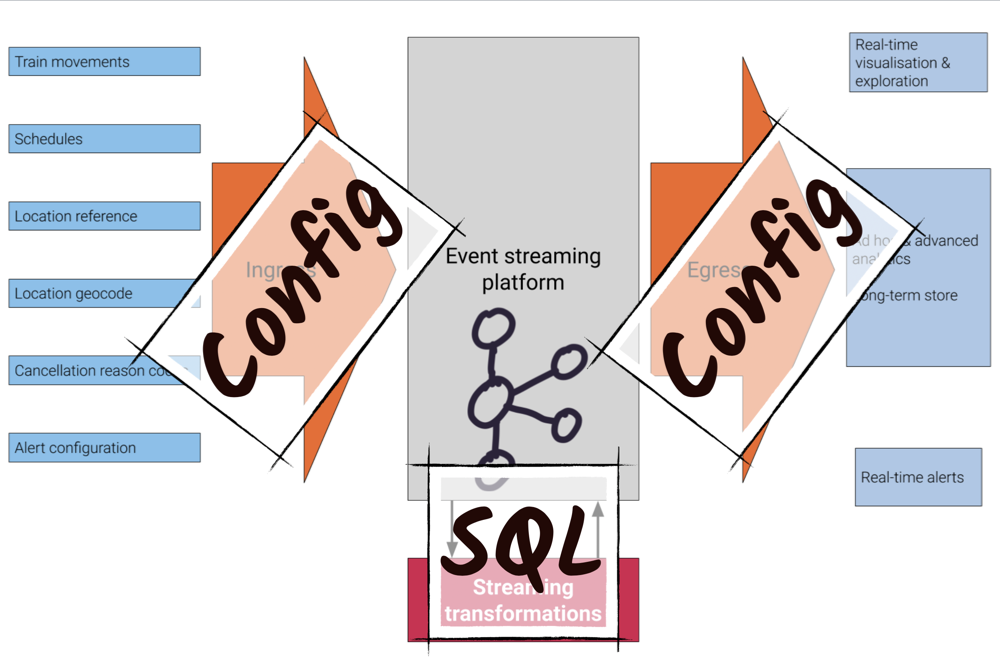

🚂 On Track with Apache Kafka – Building a Streaming ETL Solution with Rail Data

Trains are an excellent source of streaming data—their movements around the network are an unbounded series of events. Using this data, Apache Kafka® and Confluent Platform can provide the foundations […]

Internet of Things (IoT) and Event Streaming at Scale with Apache Kafka and MQTT

The Internet of Things (IoT) is getting more and more traction as valuable use cases come to light. A key challenge, however, is integrating devices and machines to process the […]

Kafka Summit San Francisco 2019 Session Videos

Last week, the Kafka Summit hosted nearly 2,000 people from 40 different countries and 595 companies—the largest Summit yet. By the numbers, we got to enjoy four keynote speakers, 56 […]

Building a Real-Time, Event-Driven Stock Platform at Euronext

As the head of global customer marketing at Confluent, I tell people I have the best job. As we provide a complete event streaming platform that is radically changing how […]

How to Run Apache Kafka with Spring Boot on Pivotal Application Service (PAS)

This tutorial describes how to set up a sample Spring Boot application in Pivotal Application Service (PAS), which consumes and produces events to an Apache Kafka® cluster running in Pivotal […]

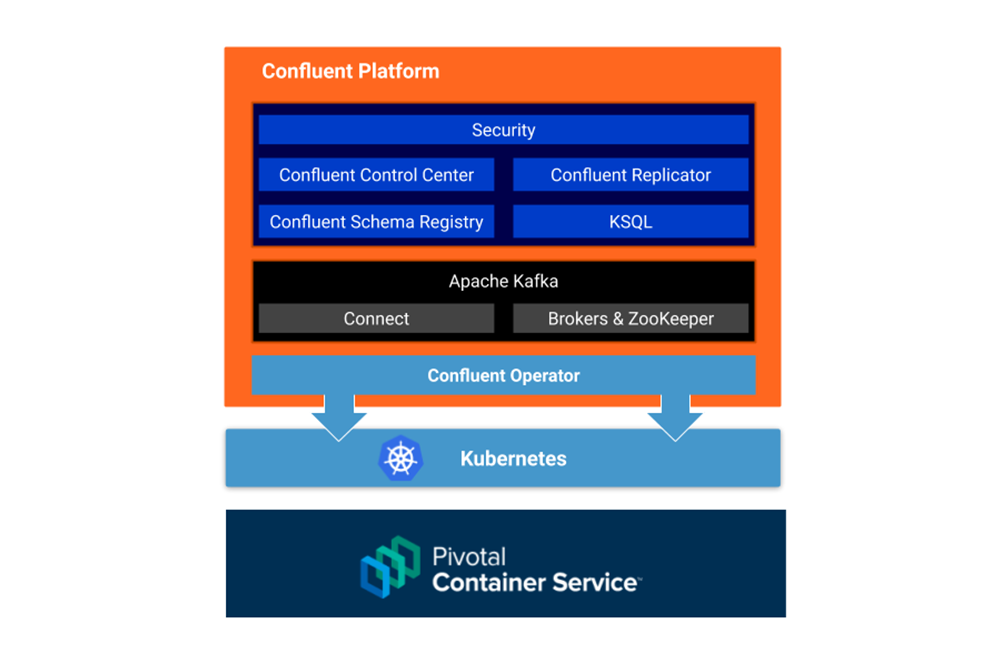

How to Deploy Confluent Platform on Pivotal Container Service (PKS) with Confluent Operator

This tutorial describes how to set up an Apache Kafka® cluster on Enterprise Pivotal Container Service (Enterprise PKS) using Confluent Operator, which allows you to deploy and run Confluent Platform […]

Why Scrapinghub’s AutoExtract Chose Confluent Cloud for Their Apache Kafka Needs

We recently launched a new artificial intelligence (AI) data extraction API called Scrapinghub AutoExtract, which turns article and product pages into structured data. At Scrapinghub, we specialize in web data […]

Kafka Summit San Francisco 2019: Day 2 Recap

If you looked at the Kafka Summits I’ve been a part of as a sequence of immutable events (and they are, unless you know something about time I don’t), it […]

Kafka Summit San Francisco 2019: Day 1 Recap

Day 1 of the event, summarized for your convenience. They say you never forget your first Kafka Summit. Mine was in New York City in 2017, and it had, what, […]

Free Apache Kafka as a Service with Confluent Cloud

Go from zero to production on Apache Kafka® without talking to sales reps or building infrastructure. Apache Kafka is the standard for event-driven applications. But it’s not without its challenges, […]

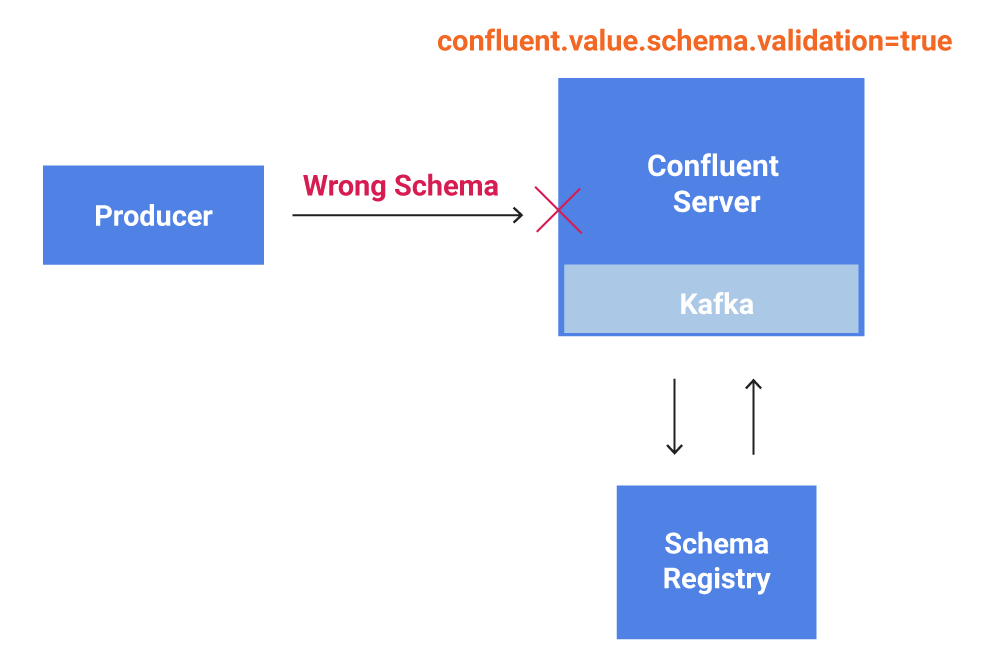

Schema Validation with Confluent Platform 5.4

Robust data governance support through Schema Validation on write is now supported in Confluent Platform 5.4. Schema Validation enables the broker to verify that data produced to an Apache Kafka® […]

Real-Time Analytics and Monitoring Dashboards with Apache Kafka and Rockset

In the early days, many companies simply used Apache Kafka® for data ingestion into Hadoop or another data lake. However, Apache Kafka is more than just messaging. The significant difference […]

Every Company is Becoming a Software Company

In 2011, Marc Andreessen wrote an article called Why Software Is Eating the World. The central idea is that any process that can be moved into software will be. This […]

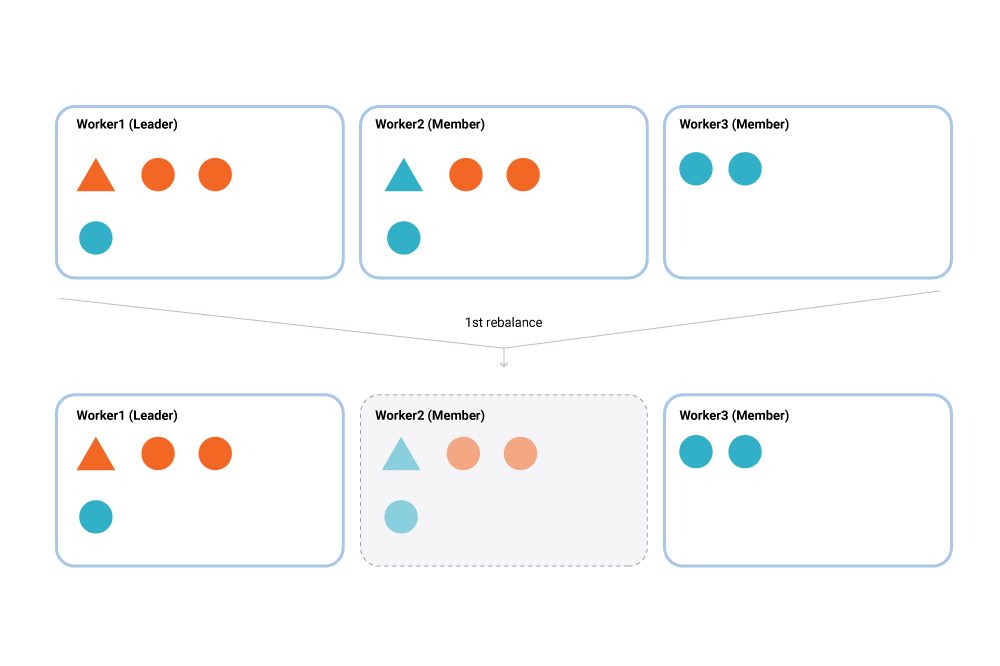

Incremental Cooperative Rebalancing in Apache Kafka: Why Stop the World When You Can Change It?

"There is a coming and a going / A parting and often no—meeting again." (Franz Kafka, 1897) Load balancing and scheduling are at the heart of every distributed system, and […]